BC Law AI News & Insights: February 2026 Edition

In this newsletter:

- A practical exercise for building reusable AI expertise

- February AI Test Kitchen sessions

- BC Talks AI call for proposals

- The latest from OpenAI, Google, and Anthropic

AI Test Kitchen – February Sessions

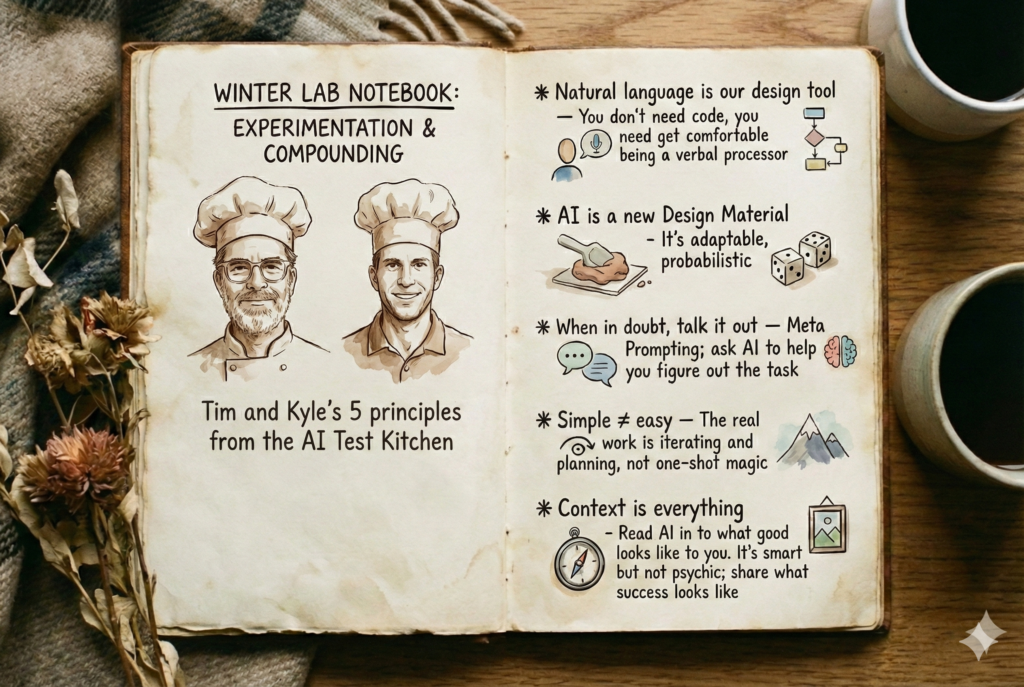

The Center for Digital Innovation in Learning and BC Law Library are hosting a new round of AI Test Kitchen workshops. These hands-on sessions are designed for experimentation—identify tasks that could use AI support, build a custom assistant, test it, and iterate.

All sessions online via Zoom, 12:00-1:30 pm:

- Thursday, February 5

- Thursday, February 12

- Thursday, February 19

Facilitated by Tim Lindgren (CDIL) and Kyle Fidalgo (Law Library).

BC Talks AI – Save the Date

Wednesday, May 13 — BC’s full-day AI event for faculty and staff returns, focused on generating forward momentum in understanding AI and its thoughtful implementation at Boston College.

Proposals due today! The Campus AI Steering Committee is accepting proposals that align with strategic AI themes. Priority goes to proposals sharing work that’s been completed or is currently in place—exactly the kind of practical experience this newsletter has been encouraging.

- Proposal Deadline: February 2, 2026 (today)

- Decisions: February 23, 2026

- Submit: Proposal Form

Registration opens in March. More details: BC Generative AI Events

Winter Workshop Series – Resources Available

The January Ed Tech workshop series has wrapped, but all resources remain available. If you missed a session or want to revisit the materials:

- Getting Things Done With AI — Lab-style sessions for putting AI to work on your actual tasks

- Building a Resource Guide Assistant — Creating low-stakes assistants that answer questions using your materials

- Creating Course Resources With AI — Using AI to jump-start simulations, exercises, assessments, and discussion prompts

- Course AI Policy Considerations — Practical frameworks for course AI policies

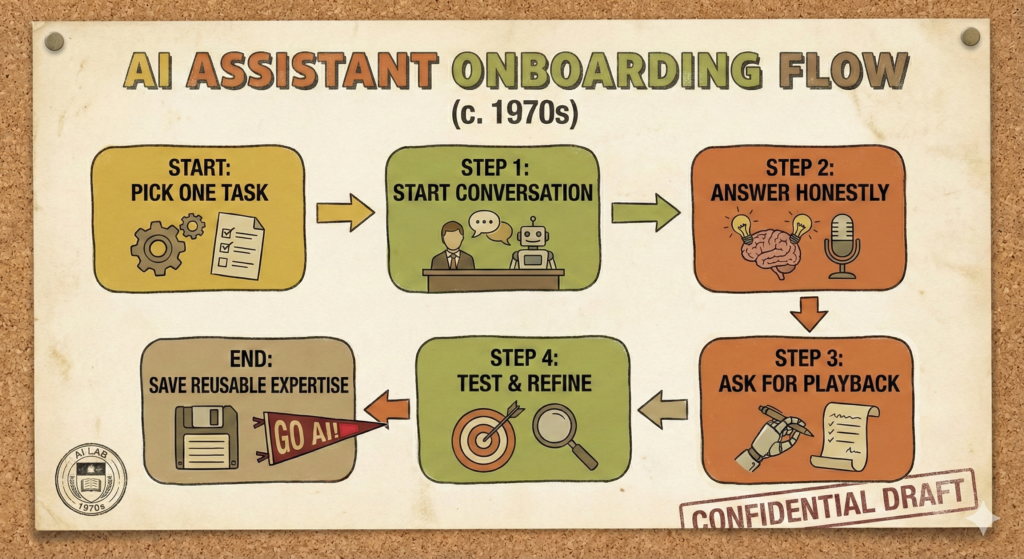

Prompting Tip: Onboard Your AI to One Task

The skills that make you effective with AI are the same collaboration and management skills that work with humans—articulating goals, providing context, describing quality, giving feedback—and you can start building reusable expertise by documenting your standards in plain text. Here’s an exercise to put that into practice.

The premise: Imagine you’re onboarding a sharp, eager new colleague to handle one specific part of your job. They’re competent and willing, but they know nothing about your role, your standards, or the way you like things done. How would you get them up to speed? Put another way: how would you describe what you now know about your role to a beginner version of yourself just starting in the position?

Pick one task — something you do regularly that could benefit from an extra set of hands. Not your entire job. One workflow, one recurring deliverable, one process.

Then try this:

- Start a conversation with your AI tool of choice (Gemini, ChatGPT, Claude — any of them work). Tell it: “I want to teach you how I handle [your task]. Interview me — ask me questions about what this task involves, what good output looks like, what mistakes to avoid, and what context you’d need to do it well.”

- Answer its questions honestly. This is where the real value is. The AI will ask you things you haven’t had to articulate before — the implicit knowledge you carry around but have never written down. What does “good enough” look like? What are the edge cases? What do you check before you consider it done?

- Ask it to play it back. Once you’ve walked through it, ask: “Based on what I’ve told you, write up a clear set of instructions that someone new could follow to handle this task to my standards.” Review what it produces. Edit it. Fill in gaps.

- Test it. Give the AI a real (or realistic) instance of the task and have it follow the instructions you just co-created. See where the output hits and where it misses. Refine.

The goal isn’t a perfect result on the first try — it’s surfacing the tacit expertise you carry and turning it into something explicit and reusable. What you end up with is a set of instructions that works for AI collaboration, but honestly, it would work just as well for onboarding a human colleague. That’s the point.

If you want to take it further, save those instructions as a custom GPT, a Claude Project, or a Gemini Gem. Now you’ve got a reusable starting point for every time you need help with that task.
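If you prefer to keep your prompts in a script or notes file, the exercise can be sketched as a few plain-text templates. This is a minimal illustration, not a feature of any AI tool: the function names and the wording of the test template are assumptions made for the example, and the interview and play-back prompts are lightly adapted from the steps above. The output is ordinary text to paste into Gemini, ChatGPT, or Claude.

```python
# Illustrative sketch only: these names are hypothetical and are not part of
# any AI tool's API. The script builds plain text that you paste into a chat.

# Steps 1 and 3 of the exercise, lightly adapted from the newsletter text.
INTERVIEW_PROMPT = (
    "I want to teach you how I handle {task}. Interview me: ask me questions "
    "about what this task involves, what good output looks like, what mistakes "
    "to avoid, and what context you'd need to do it well."
)
PLAYBACK_PROMPT = (
    "Based on what I've told you, write up a clear set of instructions that "
    "someone new could follow to handle this task to my standards."
)

# Step 4: the wording of this template is an assumption, not from the newsletter.
TEST_PROMPT = (
    "Follow the instructions below to complete the task.\n\n"
    "Instructions:\n{instructions}\n\n"
    "Task:\n{example}"
)


def onboarding_prompts(task: str) -> list[str]:
    """Return the interview and play-back prompts for one named task."""
    task = task.strip()
    if not task:
        raise ValueError("Name one specific task, not an empty string.")
    return [INTERVIEW_PROMPT.format(task=task), PLAYBACK_PROMPT]


def build_test_prompt(instructions: str, example: str) -> str:
    """Combine your edited instructions with a real (or realistic) example."""
    return TEST_PROMPT.format(
        instructions=instructions.strip(), example=example.strip()
    )


if __name__ == "__main__":
    for prompt in onboarding_prompts("drafting the weekly reference-desk summary"):
        print(prompt, end="\n\n")
```

Once you have edited the instructions the AI plays back, `build_test_prompt(instructions, example)` pairs them with a sample task, so you can rerun the same test after each revision.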

One final note: If you feel as though it’s hard to keep up with developments in AI, you’re in good company. Even Andrej Karpathy feels behind. The reframe that’s helping me: stop worrying about keeping up with AI and focus on the human side. Can you articulate what you want clearly? Can you describe what good looks like? Those are the durable skills that won’t go out of style.

Model & Feature Updates

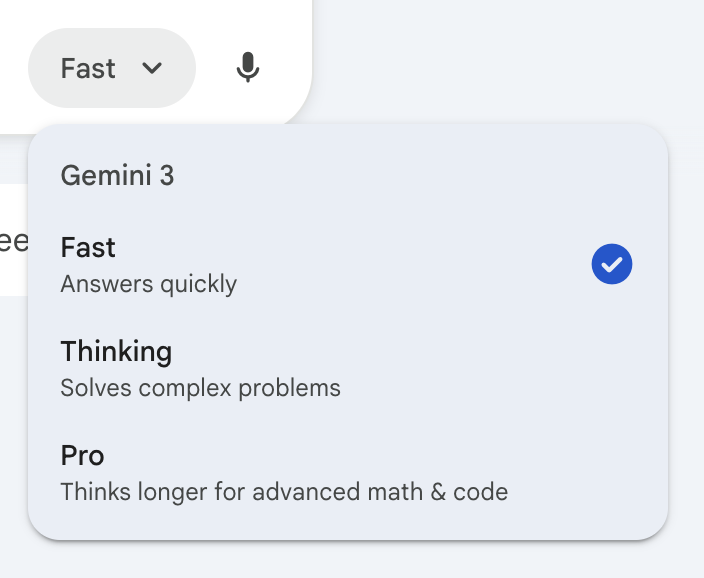

Gemini 3 Flash is now live. Since the launch of Gemini 3, it has not been obvious which model you are interacting with in the Gemini app. Here is a quick reference for the model picker:

- Fast = Gemini 3 Flash

- Thinking = Gemini 3 Flash (with thinking)

- Pro = Gemini 3 Pro (with thinking)

For most of your work, selecting “Thinking” or “Pro” will get you the best results. For harder problems, such as coding, research assistance, or anything that calls for enhanced reasoning and planning, make sure that “Pro” is selected.

Agentic Vision, a recently announced capability in Gemini 3 Flash, combines visual reasoning with code execution to ground answers in visual evidence.

OpenAI

GPT-5.2 continues to push benchmarks. An update to the recent GDPval testing shows a 70.9% win rate against human experts on economically valuable tasks. Crossing this threshold suggests that GPT-5.2 can reliably save both time and cost, compared with human experts, across a range of professional tasks.

Other updates:

- Shareable projects now available.

- Prism, a free workspace for scientific writing and collaboration, is now available for ChatGPT users with a personal account.

Anthropic

Claude Opus 4.5 hit another milestone: it successfully completed one of Anthropic’s notoriously difficult take-home technical evaluations. The provocative framing from their team: “Given enough time, humans still outperform current models.” This subtle statement suggests that the script is flipping; we used to say that about AI.

Claude Constitution — Anthropic published what was previously known as Claude’s “soul document”—the values and guidelines that shape how Claude responds and the kind of entity they would like Claude to be. It’s one of the most transparent looks at how model companies write documents that train AI model behavior.

Cowork, now in research preview, is an interface for agentic workflows aimed at non-technical users. Describe an outcome and Claude will take on complex, multi-step tasks, coming back to you with completed work.

Curated Links

Interesting Conversations, Essays, and How-Tos from AI thought leaders, explorers and tinkerers

- A.I. Changed My Classroom. But Not for the Worse. – Boston College English professor Carlo Rotella on responding to AI by “doubling down on the humanity of the humanities.” A thoughtful, essential take from one of our own.

- What’s Really Going On with AI and Jobs – The Center for Humane Technology examines how AI affects the “entry-level” tasks essential for training future experts—a critical perspective for anyone involved in professional development and student career readiness.

- New Study: AI, Automation, and Expertise – An analysis of hundreds of millions of job ads across 39 countries to understand how AI is changing labor markets around the world.

- Two topics of interest from a recent Artificial Intelligence Show Podcast: using AI for course creation, and a discussion of the growing disconnect between employees and leadership on AI adoption.

- Simon Willison’s Year in LLMs – A comprehensive review of 2025’s major shifts, from reasoning models to the mainstreaming of command-line AI tools.

- Compound Engineering – Lessons from coding with AI that you can apply to any knowledge work. Every’s methodology: Plan → Work → Review → Compound. Each collaborative effort with AI ideally makes the next one easier.

- Management as AI Superpower – Ethan Mollick argues that the skills required for effective human delegation—scoping problems, defining deliverables, providing feedback and recognizing quality—are exactly what’s needed to master AI.

- What makes an AI-Forward firm? – A Harvard Business professor explains the organizational and cultural hurdles that prevent institutions from becoming “AI-first.”

- An AMA with Anthropic’s AI philosopher, Amanda Askell, and her appearance on The New York Times Hard Fork Podcast – Insights into the ethical and philosophical frameworks used to shape model behavior.

- The adolescence of technology – A high-level confrontation of the risks and societal impacts of powerful AI models.

- How we frame machines – A practical reflection from an educator on building a custom AI character to pressure-test a presentation and sharpen his own thinking.

- Custom Instructions for Higher Education – A look at how educators are leveraging the adaptability of AI tools to create more composable, personalized learning environments.

- Thoughts on AI Hyperproductivity – An examination of “compounding teams” that use AI to build bespoke tools, creating a flywheel of exponential productivity.

- Students should be using AI to push, not replace their thinking – Introduces the framework of “possibility literacy,” helping students navigate the “productive paradoxes” of AI to enhance human agency rather than diminish it.

- Protect Teaching Expertise in the Age of AI – Offers an “internal compass” for educators, providing principles to ensure AI acts as pedagogical scaffolding while safeguarding the authority, purpose, and uniquely human expertise that defines great teaching.

Have questions or ideas? Want help creating your own AI workflows? Reach out to Kyle Fidalgo at atrinbox@bc.edu.

Ready to build your AI competency? Discover AI literacy resources at AI Foundations.